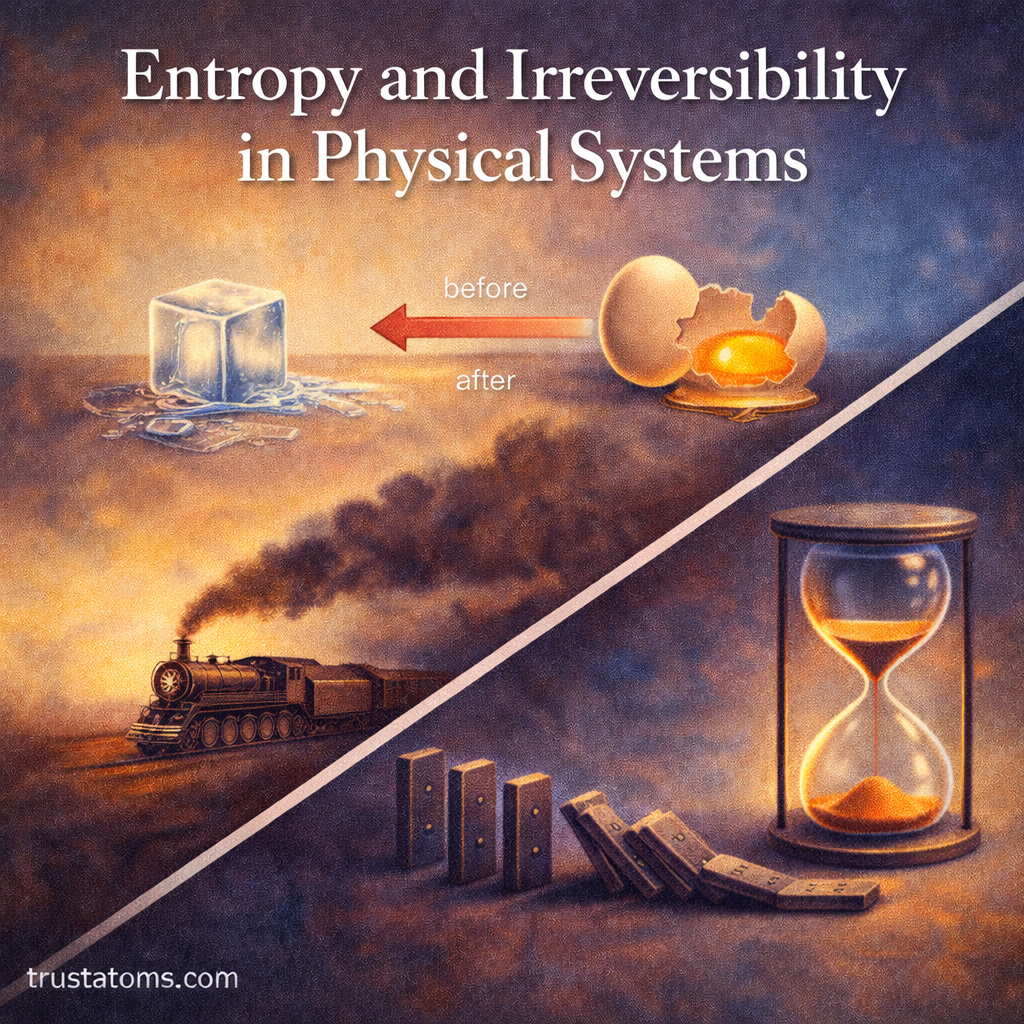

Entropy is one of the most profound concepts in physics. It explains why heat flows from hot to cold, why ice melts in warm air, and why certain processes in nature never spontaneously run in reverse.

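At its core, entropy counts the microscopic ways a system can be arranged. Physical systems tend to evolve toward higher entropy. This is often described as a drift toward disorder, but more precisely it is a drift toward the most statistically probable state.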

This article explores entropy, irreversibility, and their role in physical systems, from thermodynamics to cosmology.

What Is Entropy?

Entropy is a thermodynamic quantity that measures the number of possible microscopic arrangements (microstates) consistent with a system's observed macroscopic state. Informally, it describes the system's degree of randomness.

In simple terms:

- Low entropy = more order

- High entropy = more disorder

- Systems naturally evolve toward higher entropy

Entropy helps explain the direction of spontaneous processes.

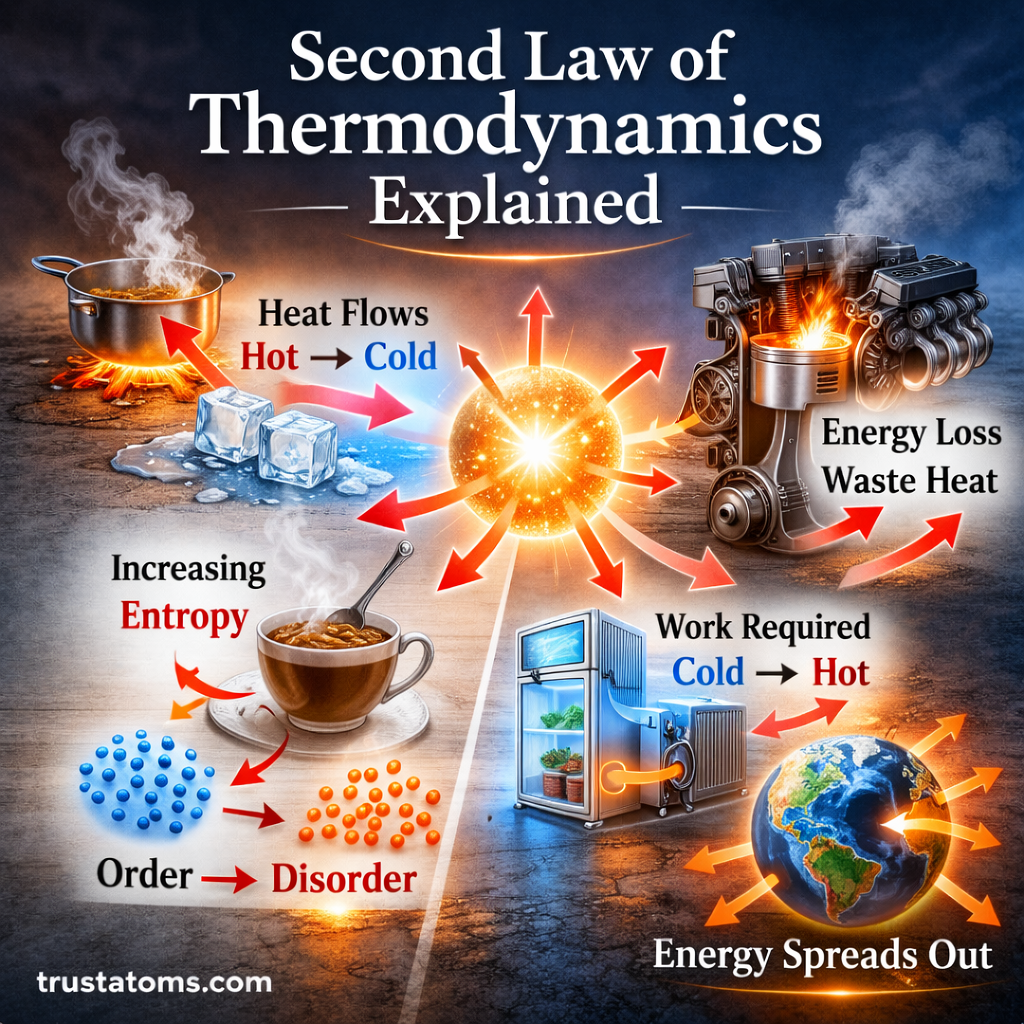

The Second Law of Thermodynamics

Irreversibility is formalized by the Second Law of Thermodynamics.

It states:

In an isolated system, total entropy never decreases over time.
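In symbols, the Second Law is commonly written as follows. The second form, the Clausius inequality, applies to any system absorbing heat δQ at a boundary temperature T. In both forms, equality holds only for reversible processes:

$$
\Delta S_{\text{isolated}} \ge 0, \qquad dS \ge \frac{\delta Q}{T}
$$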

This means:

- Energy spreads out

- Systems move toward equilibrium

- Natural processes have a preferred direction

This preferred direction is often called the “arrow of time.”

Entropy and Energy Distribution

Entropy is closely related to how energy is distributed in a system.

When energy is concentrated:

- Fewer microscopic arrangements are possible

- Entropy is lower

When energy spreads out:

- More microscopic arrangements are possible

- Entropy increases
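This counting can be made concrete with a minimal sketch based on the Einstein solid, a standard textbook toy model that is not part of the original text. In this model, q identical energy quanta are shared among N distinguishable oscillators, which can be done in C(q + N - 1, q) ways. The function name `einstein_microstates` and the system sizes are illustrative assumptions.

```python
from math import comb

def einstein_microstates(n_oscillators: int, quanta: int) -> int:
    """Ways to distribute `quanta` identical energy units among
    `n_oscillators` distinguishable oscillators: C(q + N - 1, q)."""
    return comb(quanta + n_oscillators - 1, quanta)

quanta = 10
# Energy concentrated: all 10 quanta confined to a small group of 5 oscillators.
concentrated = einstein_microstates(5, quanta)
# Energy spread out: the same 10 quanta free to move among 50 oscillators.
spread = einstein_microstates(50, quanta)

print(concentrated)           # 1001
print(spread > concentrated)  # True
```

Confining the same energy to a few oscillators allows far fewer arrangements than letting it spread out. This is the sense in which dispersed energy corresponds to higher entropy.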

For example:

- A hot object placed in a cold room will cool down.

- Thermal energy spreads from the concentrated source (the object) into the much larger room.

- Total entropy increases.
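The entropy bookkeeping behind this example can be sketched with ΔS = Q/T for each body. The sketch assumes the object and the room are large enough that their temperatures stay essentially constant while a small amount of heat Q moves between them. The function name and the numbers are hypothetical.

```python
def entropy_change_heat_transfer(q_joules: float, t_hot: float, t_cold: float) -> float:
    """Total entropy change (J/K) when heat q flows from a body at t_hot
    to surroundings at t_cold, treating both temperatures (in kelvin) as
    constant during the transfer."""
    ds_hot = -q_joules / t_hot   # the hot object loses entropy
    ds_cold = q_joules / t_cold  # the cooler room gains more than the object lost
    return ds_hot + ds_cold

# Illustrative numbers: 1000 J leaves a 350 K object and enters a 293 K room.
print(round(entropy_change_heat_transfer(1000.0, 350.0, 293.0), 3))  # 0.556
```

The same Q is divided by a smaller temperature on the receiving side. As a result, the room's gain outweighs the object's loss whenever t_hot > t_cold, and the net change drops to zero only when the temperatures are equal.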

Microscopic View of Entropy

From a statistical perspective, entropy is tied to probability.

A system can be arranged in many microscopic configurations (microstates). The macroscopic state we observe corresponds to a large number of possible microstates.

Important idea:

The more microstates available, the higher the entropy.
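This idea is captured by Boltzmann's entropy formula. Here W is the number of microstates compatible with the observed macrostate, and k_B = 1.380649 × 10⁻²³ J/K is Boltzmann's constant:

$$
S = k_B \ln W
$$

Because the logarithm grows with W, more microstates means higher entropy. The logarithm also makes entropy additive: the microstate counts of two independent systems multiply (W = W_1 W_2), while their entropies add.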

For example:

- Gas molecules confined to one side of a box represent fewer microstates.

- Gas evenly distributed represents far more microstates.

- The evenly distributed state is overwhelmingly more probable.

This statistical interpretation explains why entropy increases naturally.
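The box example can be counted directly. This minimal sketch assumes N distinguishable, non-interacting molecules, each equally likely to be found in either half. Under that assumption, the number of microstates with k molecules on the left is C(N, k). The function names are hypothetical.

```python
from math import comb, log

def left_right_microstates(n_molecules: int, n_left: int) -> int:
    """Microstates with exactly n_left of n_molecules in the left half,
    treating each molecule as independently on the left or right."""
    return comb(n_molecules, n_left)

def probability_all_left(n_molecules: int) -> float:
    """Chance that every molecule is found in the left half at the same time."""
    return 0.5 ** n_molecules

n = 100
confined = left_right_microstates(n, n)   # all molecules on the left: 1 microstate
even = left_right_microstates(n, n // 2)  # evenly split: C(100, 50) microstates
print(confined, even)
# Entropy in units of k_B, using S / k_B = ln W
print(log(confined), log(even))
print(probability_all_left(n))
```

Even with only 100 molecules, the evenly split macrostate has about 10^29 microstates while the all-left one has exactly one. The chance of finding every molecule on the left is 2^-100. Macroscopic samples of gas contain on the order of 10^23 molecules, which makes the disparity far more extreme.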

Reversible vs Irreversible Processes

Thermodynamics distinguishes between two kinds of processes.

Reversible Processes

A reversible process:

- Occurs infinitely slowly

- Maintains equilibrium at every stage

- Can be reversed so that both the system and its surroundings return to their original states

Reversible processes are idealizations. Real processes can approach them but never achieve them exactly.

Irreversible Processes

An irreversible process:

- Happens spontaneously

- Typically involves friction, turbulence, diffusion, or heat flow across a finite temperature difference

- Cannot be undone without leaving a lasting change in the surroundings

- Increases total entropy

Examples of irreversible processes:

- Mixing of gases

- Heat flow from hot to cold

- Breaking of a glass

- Chemical combustion

Irreversibility defines the direction of real-world processes.
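The expansion of a gas makes the contrast concrete. This minimal sketch assumes an ideal gas expanding at constant temperature from volume V1 to V2. Along a reversible path, the gas's entropy gain nR ln(V2/V1) is exactly offset by the heat reservoir. In free expansion into a vacuum, nothing offsets it. The function and parameter names are hypothetical.

```python
from math import log

R = 8.314462618  # molar gas constant, J/(mol K)

def isothermal_entropy_budget(n_mol: float, v1: float, v2: float, reversible: bool):
    """Return (dS_gas, dS_surroundings, dS_total) in J/K for an ideal gas
    expanding isothermally from volume v1 to v2."""
    ds_gas = n_mol * R * log(v2 / v1)  # state function: same for both paths
    if reversible:
        # Quasi-static expansion against a piston: the gas absorbs heat
        # Q = T * ds_gas from a reservoir at the same temperature.
        ds_surr = -ds_gas
    else:
        # Free expansion into vacuum: no heat or work is exchanged.
        ds_surr = 0.0
    return ds_gas, ds_surr, ds_gas + ds_surr

print(isothermal_entropy_budget(1.0, 1.0, 2.0, reversible=True))   # total 0.0
print(isothermal_entropy_budget(1.0, 1.0, 2.0, reversible=False))  # total about 5.763 J/K
```

Both paths end in the same final state, so the gas's own entropy change is identical. Only the irreversible path increases the total entropy.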

Entropy and Equilibrium

An isolated system reaches thermodynamic equilibrium when its entropy is maximized.

At equilibrium:

- No net flows of energy or matter occur

- Macroscopic properties remain constant

- Entropy is at its maximum possible value, given the system's fixed energy, volume, and particle number

Equilibrium represents the most probable state of the system.
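The idea that equilibrium is the most probable macrostate can be sketched with two Einstein solids, a textbook model not introduced in the original text. The two solids exchange energy while isolated together. For each way of splitting the energy, the combined microstate count is the product of the two solids' counts, and the split with the largest product is the equilibrium one. The function names and sizes are illustrative assumptions.

```python
from math import comb

def einstein_microstates(n_oscillators: int, quanta: int) -> int:
    """Ways to place `quanta` identical energy units in `n_oscillators` oscillators."""
    return comb(quanta + n_oscillators - 1, quanta)

def most_probable_split(n_a: int, n_b: int, total_quanta: int) -> int:
    """Quanta held by solid A in the macrostate with the most combined
    microstates, i.e. the maximum total entropy."""
    def combined(q_a: int) -> int:
        # Independent subsystems: microstate counts multiply.
        return einstein_microstates(n_a, q_a) * einstein_microstates(n_b, total_quanta - q_a)
    return max(range(total_quanta + 1), key=combined)

# Solid A has 300 oscillators and solid B has 200; together they hold 100 quanta.
print(most_probable_split(300, 200, 100))  # 60
```

The maximum falls at 60 quanta in A and 40 in B, so each oscillator holds the same average energy. For identical oscillators, this is the condition of equal temperature.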

Entropy in Everyday Phenomena

Entropy explains many common observations:

- Ice melts at room temperature.

- Perfume spreads through a room.

- Cream mixes into coffee.

- Mechanical energy converts to heat due to friction.

- Batteries lose usable energy over time.

In each case, energy becomes more dispersed and less available for useful work.
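The first example, melting ice, can be quantified. This minimal sketch assumes ice at its melting point (273.15 K at 1 atm) absorbing its latent heat of fusion, roughly 334 J/g, from a large room held at a constant temperature. It ignores the subsequent warming of the meltwater. The names and numbers are illustrative.

```python
LATENT_HEAT_FUSION_WATER = 334.0  # J per gram, approximate value for ice
T_MELT = 273.15                   # K, melting point of ice at 1 atm

def melting_entropy_change(mass_g: float, t_room: float) -> float:
    """Total entropy change (J/K) when mass_g of ice at 0 °C melts by drawing
    heat from a large room held at t_room (K). Warming of the meltwater is ignored."""
    q = mass_g * LATENT_HEAT_FUSION_WATER  # heat absorbed by the ice
    ds_ice = q / T_MELT    # ice gains entropy at its melting temperature
    ds_room = -q / t_room  # room loses the same heat at its own temperature
    return ds_ice + ds_room

print(melting_entropy_change(10.0, 293.15))  # positive: melting proceeds on its own
print(melting_entropy_change(10.0, 263.15))  # negative: would violate the Second Law
```

Melting in a room above 0 °C raises total entropy. In a room below freezing, the same process would lower it, which is why ice does not melt there.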

Entropy and the Arrow of Time

One of the most fascinating implications of entropy is its connection to time.

The fundamental laws of microscopic physics are almost entirely time-symmetric: they work equally well run forward or backward. Entropy is what introduces the asymmetry.

Why do we remember the past but not the future?

A common answer points to entropy:

- The past had lower entropy.

- The future will have higher entropy.

The increase of entropy gives time its direction.
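The link between a low-entropy starting point and a one-way trend can be illustrated with the Ehrenfest urn model, a classic toy model swapped in here for illustration. In this model, N balls sit in two urns, and at each step one ball chosen at random switches urns. The rule favors neither direction, yet when every ball starts in one urn, the entropy ln C(N, n_left) climbs toward its maximum. The function name, seed, and sizes are assumptions.

```python
import random
from math import comb, log

def ehrenfest_entropy(n_balls: int, steps: int, seed: int = 0) -> list[float]:
    """Ehrenfest urn model: start with all balls in the left urn; at each step
    pick a ball uniformly at random and move it to the other urn.
    Returns the entropy S / k_B = ln C(N, n_left) after each step."""
    rng = random.Random(seed)
    n_left = n_balls  # low-entropy initial condition
    history = [log(comb(n_balls, n_left))]
    for _ in range(steps):
        # The chosen ball is in the left urn with probability n_left / N.
        if rng.random() < n_left / n_balls:
            n_left -= 1
        else:
            n_left += 1
        history.append(log(comb(n_balls, n_left)))
    return history

s = ehrenfest_entropy(100, 2000)
s_max = log(comb(100, 50))
print(s[0], s[-1], s_max)
```

Once it has risen, the entropy only fluctuates slightly below its maximum. A return to the all-left starting state is possible in principle. However, the expected time to return is 2^100 steps (Kac's lemma), so it is never observed in practice.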

Entropy and Heat Engines

Entropy plays a central role in heat engines and energy systems.

In a heat engine:

- Heat flows from a hot reservoir to a cold reservoir.

- Some energy converts into work.

- The rest is rejected as heat to the cold reservoir, carrying entropy with it.

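No heat engine can be 100% efficient. The entropy it draws from the hot reservoir must be discharged with waste heat into the cold reservoir, so some heat is always rejected rather than converted to work. In real engines, friction and other irreversibilities generate additional entropy.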

This is why:

- Perpetual motion machines are impossible, including those that would convert heat entirely into work (perpetual motion of the second kind).

- Real energy transformations always involve some losses.
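The Carnot bound η = 1 - T_cold/T_hot and this entropy bookkeeping can be checked numerically. The sketch treats both reservoirs as ideal and considers one full engine cycle. The function names and temperatures are hypothetical. In the results, an engine delivering the maximum (Carnot) work generates no net entropy, a less efficient engine generates some, and a 100%-efficient engine would reduce total entropy, which the Second Law forbids.

```python
def carnot_efficiency(t_hot: float, t_cold: float) -> float:
    """Maximum fraction of heat convertible to work between two reservoirs (K)."""
    return 1.0 - t_cold / t_hot

def entropy_generated(q_hot: float, work: float, t_hot: float, t_cold: float) -> float:
    """Net entropy change (J/K) of both reservoirs over one engine cycle.
    The engine returns to its initial state, so its own entropy change is zero."""
    q_cold = q_hot - work  # energy conservation: heat rejected to the cold side
    return -q_hot / t_hot + q_cold / t_cold

t_hot, t_cold, q_hot = 600.0, 300.0, 1000.0
w_max = carnot_efficiency(t_hot, t_cold) * q_hot  # 500.0 J for these temperatures
print(w_max, entropy_generated(q_hot, w_max, t_hot, t_cold))  # ideal engine: about 0 J/K
print(entropy_generated(q_hot, 300.0, t_hot, t_cold))  # less efficient engine: positive
print(entropy_generated(q_hot, q_hot, t_hot, t_cold))  # 100% efficiency: negative, forbidden
```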

Entropy and Information

A closely related quantity, Shannon entropy, appears in information theory.

In this context:

- Entropy measures uncertainty.

- Higher entropy = more unpredictability.

- Lower entropy = more structured information.

This connection reveals a deep relationship between thermodynamics and communication systems.
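Shannon's formula H = -Σ p log2 p, measured in bits, is the standard way to quantify this uncertainty. Here is a minimal sketch; the function name is chosen for illustration.

```python
from math import log2

def shannon_entropy(probabilities: list[float]) -> float:
    """Shannon entropy in bits: H = -sum(p * log2(p)), skipping zero-probability outcomes."""
    return sum(-p * log2(p) for p in probabilities if p > 0)

print(shannon_entropy([0.5, 0.5]))  # fair coin: 1.0 bit
print(shannon_entropy([0.9, 0.1]))  # biased coin: less than 1 bit of uncertainty
print(shannon_entropy([1.0]))       # certain outcome: 0.0 bits
print(shannon_entropy([0.25] * 4))  # four equally likely outcomes: 2.0 bits
```

The Gibbs entropy of statistical mechanics, S = -k_B Σ p ln p, has the same functional form, with the natural logarithm and a factor of k_B.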

Entropy in the Universe

On a cosmic scale, entropy has enormous implications.

The universe began in a state of very low entropy.

Over time:

- Stars form and burn fuel.

- Energy radiates into space.

- Matter spreads out.

- Entropy increases.

Eventually, the universe may reach a state of maximum entropy, sometimes referred to as “heat death.”

In such a state:

- No temperature differences exist.

- No usable energy remains.

- No work can be performed.

Why Irreversibility Matters

Irreversibility shapes:

- Energy systems

- Climate processes

- Biological evolution

- Chemical reactions

- Engineering design

Understanding entropy allows scientists and engineers to:

- Improve energy efficiency

- Predict system behavior

- Analyze stability

- Model natural processes

Irreversibility is not just a limitation — it is a fundamental feature of reality.

Key Takeaways

- Entropy measures the number of microscopic arrangements (microstates) consistent with a system's macroscopic state.

- The Second Law states that the total entropy of an isolated system never decreases.

- Irreversible processes increase total entropy.

- Equilibrium corresponds to maximum entropy.

- Entropy explains the arrow of time.

- Real (irreversible) energy transformations always increase total entropy.

- Entropy connects thermodynamics, probability, and information theory.

Entropy and irreversibility reveal a deep truth: nature evolves toward the most probable configuration, and this evolution defines the direction of time itself.